Goldman Sachs may have over $2 trillion in assets under supervision. But in its IT engine room, like many large organisations, the investment bank relies heavily on free and open source software (OSS) as well as some aging yet still widely deployed commercial databases -- including to help it serve internal analytics dashboards.

Internally its staff often need to analyse up to terabytes of data sitting in on-premises relational databases -- using a mixture of tools including data visualisation software Tableau for canned reports, open source data modeling platform Legend for custom explorations and ad hoc SQL queries to debug production issues.

In an overlooked yet insightful blog the bank recently detailed the critical OSS tool it has deployed to help tackle query latency. (Goldman Sachs has also spun up a range of its own open source tools like database deployment software Obevo, open sourced under an Apache 2.0 license in 2017 to help developers manage database schema definitions for new and existing sytems under a a standard software development lifecycle or SDLC approach.)

Sybase IQ queries were getting sllllllllowwwwww....

The bank is a big user of the still widely loved Sybase IQ relational database server (now owned by SAP), with two multiplex databases being used in a live-live mode; each using a hybrid cluster architecture that involves both shared and local storage -- the latter used for catalogue metadata, temporary data, and transaction logs.

As Goldman Sachs engineers Karan Ahluwalia and Jack Griffith note however: "If you have worked closely with self-hosted/non-cloud databases, you will likely be familiar with the challenge of upgrading, load balancing, and failing over relational databases without having to touch client applications... we work extensively with relational databases which do data processing, batches, and UI connect via JDBC (TCP layer). Some of these databases do not provide a suitable load balancing and failover mechanism out of the box that works for our use cases.

"The best solution would be to place the database behind a DNS layer and have the applications connect via the DNS. However, this can cause problems when the port of the database needs to change. Changing the port or the host requires extensive coordination and testing efforts across multiple client applications. The level of effort is directly proportional to the nature/breadth of the database usage. Our databases are used extensively, so changing ports and testing them is not very efficient or feasible. Thus, in order to improve resource utilization, save time during database failovers, and improve developer efficiency, we looked into using HAProxy..."

What is HAProxy and how does Goldman Sachs use it?

HAProxy is an "event-driven, non-blocking engine combining a very fast I/O layer with a priority-based, multi-threaded scheduler" as its Github ReadMe puts it. For those to whom that is a mystifyingly inadequate explanation, it is better explained as a widely used software load balancer -- first born sometime back in in 1999.

(Load balancers help performance, availability, and redundancy by allocating work across servers to reduce the load on each machine, helping users handle more traffic and do maintenance, upgrade software, deploy websites, or swap out servers without disrupting service to the army of analysts wanting reports...)

The Goldman engineers said the bank needed its Sybase IQ cluster to serve queries with high availability but due to applications being tightly coupled with the reader nodes most of the load was being directed to one node.

"To cope with each query, Sybase IQ tries to provide the maximum amount of resources to each query, and as a result, any subsequent queries will need to wait until the first query is completed. Sybase IQ does not have built-in load balancing or failover, leading to saturated hosts and errors if there are too many queries" they said, adding: "If the primary reader nodes go down, the time it takes to point to another node may impact our SLOs..."

The bank's engineers chose to install HAProxy in TCP mode and redirect everyone wanting to querty Sybase IQ to come via HAProxy itself: "The readers will behave as if they are connecting to the Sybase IQ database directly and quickly using the existing JDBC client driver (JConnect or SQL Anywhere), but in reality, the HAProxy is in between, sorting the queries to available nodes... We set up a cluster of HAProxy instances to make it fault tolerant as well. These instances are brought up on UNIX boxes. A simple gateway sits in front of them to ensure high availability in case one of the instances go down. When we went live with the static configuration, HAProxy was unable to understand the load on the Sybase IQ reader nodes. This meant that our engineers needed to manually change the weight of the nodes to ensure that the traffic did not hit the impacted nodes".

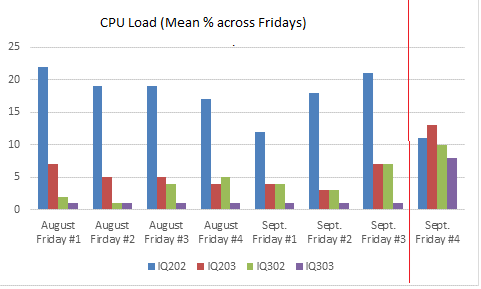

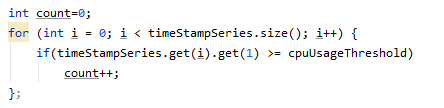

The technologies detailed are hardly groundbreaking (Sybase IQ has been around since ~1995; HAProxy since ~1999) and their deployment pragmatic and effective rather than cutting edge Yet as a sneak peak behind the scenes at the on-premises plumbing keeping one of the world's biggest investment banks humming along, arguably deserve attention. Goldman's engineers have cron jobs running to continuously monitor the state of the nodes and change the weights in HAProxy via the configuration socket -- these look at the CPU and RAM usage of the Sybase IQ reader nodes along with the number of queries that are already running to identify the load factor.

"Based on the load factor, we hit the stats socket (port 9999) to update the weights of each node. As an example, [left] is a snippet of the code – we are looking for 5 consecutive occurrences of CPU over 95% which highlights CPU flatlining and thus the factor of the load on the reader node."

The team has now cut recovery time for a primary node failure down to "a few seconds" -- within their SLOs -- as well as performed stress tests on HAProxy clusters and found that just "1 in every 1000 requests/queries can have an additional latency of 5ms by just forwarding it through HAProxy, which is negligible... As part of this exercise, we also started capturing the read queries and their response times on our cluster. Out of the total set of queries fired on our cluster during the time range, 64% queries ran with sub-second response times and 98% queries ran with sub-minute response times. Also, no queries were impacted due to cluster availability issues. Going forward, we plan to take a closer look at the data model to achieve sub-second response times for 99% of our queries."

Its a pragmatic approach but also a reminder that those hoping hoping to encourage such enterprise behemoths onto SaaS data warehouses still have some huge and entrenched workloads to peel away...