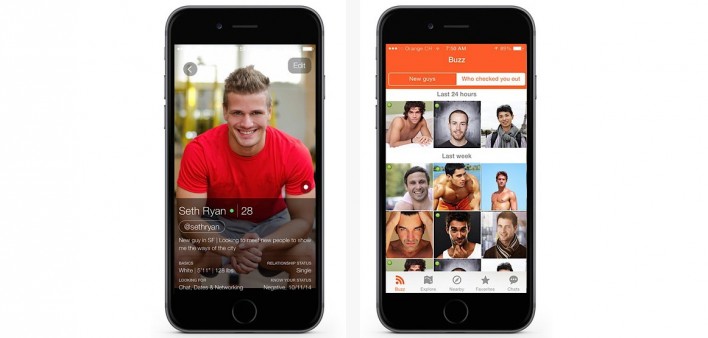

Hornet is a social network and app for the gay community that has attracted over 30 million members. Founded in 2011 and the most popular gay app in Brazil, France, Russia, Taiwan, and Turkey, Hornet has also grown rapidly in the US and Europe in recent years. That success put real pressure on its decade-old infrastructure, and the Hornet team has spent a sustained effort since then modernising its architecture.

As Nate Mitchell, Lead DevOps Engineer at Hornet, candidly told The Stack in a recent interview, those growing pains often left him prioritising whatever was “most on fire” rather than carving out time to apportion compute resources more efficiently or deliver new features.

Wanting to serve the community around the app better, including by expanding into new services and features, the team took a rigorous look at how some of the app’s most popular features worked before updating its cloud-native infrastructure to innovate further and drive down total cost of ownership (TCO).

Cassandra? "We left it alone for a long time..."

One of the last things on Nate Mitchell's list was updating the distributed database Apache Cassandra.

Cassandra is famous for being one of the most resilient databases out there, and “apart from the very occasional misadventure, Cassandra was several years' worth of not-being-on-fire,” Nate noted. “It just worked, and it underpinned a critical service, so we left it alone for a long time.”

Hornet uses Cassandra to underpin its private messaging function and users’ activity feeds, storing and archiving activity in the open source database. The application’s core API, meanwhile, is powered by AWS EC2 – “we can run up to 150, 180 instances of EC2 easily during periods of high activity,” Nate adds.

Everything requires a little love at some point, however.

As Nate puts it: “Eventually our Cassandra cluster started misbehaving at times for no apparent reason. We found that at some point, someone had attempted to run a repair against our Cassandra clusters, which had left bits and pieces of half-repaired data all over the place. That seemed to be causing real issues, and it was time to update our approach.”

I get by with a little help from my friends

At the same time, the demand that Hornet’s rapidly growing user base was placing on messaging meant it was time to make some changes: “When we discussed our issues with the [open source] Cassandra community, the consistent feedback was to talk to the team at DataStax”, says Nate.

“The team there has supported some of the largest implementations worldwide, so they have a lot of experience in dealing with issues like ours. They were extremely helpful. We worked closely with one of their engineers to basically build out a script that would go through all of the various records to clean them up, update the newsfeed cluster and then the messaging cluster in staged phases. Since we followed that approach, stability has been infinitely better and we have moved up to the latest version.”


The result was not only an up-to-date production system but also real efficiency savings: “With help from DataStax, we went from around 60% capacity on our messaging cluster to just under 30% capacity,” says Matthew Hirst, Head of Server Side Engineering at Hornet. “That is a huge efficiency gain.”

With the fires put out and significant savings made, the Hornet technology team is now looking to modernise further, including by running its application stack on Kubernetes. Matthew and Nate have already looked at the work DataStax is doing to bring Cassandra and Kubernetes together in the K8ssandra open source project.

“We think that everything will be based on Kubernetes, so we are evaluating our approach here as well. The fact that DataStax is supporting this with its open source K8ssandra distribution is great for us, and we are looking forward to exploring how this might help us in future,” says Matthew.

As Nate put it to The Stack: “I'm a big fan of Kubernetes. At the moment, if I'm building a whole bunch of custom orchestration stuff, with monkeys pulling levers on ECS, when the next guy comes in, I've got to teach them how to do all that. If I do everything on Kubernetes, when the next guy comes in, I just need to say ‘hey, do you know Kubernetes?’ That really simplifies the longevity of a project..."

"At the moment", he adds, "it’s very much a 2012-2014-era [setup] of AWS trends of auto scaling groups and load balancers and launch configurations. This works, but it still limits you to a situation where you have a single application server running on a single VM as opposed to apportioning the compute resources more efficiently.”

With the core database now running smoothly, and with those optimisations and a more modern version of Cassandra also delivering a 50% reduction in the volume of data Hornet has to store, Nate is keen to start building a more modern stack.

“I intend to move to Kubernetes in the near future”, says Nate, “although it’s a massive undertaking as we have legacy code going back to 2011, so there is a lot of work to be done.” He adds: “I’ve written Terraform scripts that can themselves spin up a Kubernetes cluster and configure it, which probably made my life more agonising than it needed to be. But now I’ve got repeatable reusable code that I can then throw at the next thing to ‘Kubernetify’.”

The final result of the project? Nate and Matthew have been able to expand the messaging service to deliver more content to users. This supports the company’s expansion of its community and group features, encouraging more interaction and longer sessions. By looking specifically at data management, the Hornet team has laid the groundwork for the company’s long-term goals around messaging and community development.

Delivered in partnership with DataStax.