In late 2020, in a landmark move, AstraZeneca open-sourced REINVENT, a “production-ready” machine learning tool for drug discovery projects, releasing it on GitHub under the highly permissive Apache 2.0 open-source licence.

The use of Artificial Intelligence (AI) and Machine Learning (ML) to power drug discovery has attracted huge interest – and considerable venture capital – over the past decade and the tool’s open-sourcing was unusual. (The pharmaceutical sector generates over $1 trillion in annual revenues and innovations are closely guarded.)

“We aim to offer the community a production-ready tool for de novo design,” said the team behind REINVENT (which uses PyTorch as a deep learning engine and reinforcement learning to identify novel active compounds).

“It can be effectively applied on drug discovery projects that are striving to resolve either exploration or exploitation problems. By releasing the code we are aiming to facilitate research on using generative methods on drug discovery problems [and hope] it can be used as an interaction point for future scientific collaborations.”

Visit the REINVENT GitHub repository – where the codebase for this toolkit is available – in 2022 and it has just four contributors and 234 stars. Using AI for drug discovery is still neither an obviously public nor an obviously open source activity (although The Stack found more collaboration than many might expect), and some of the challenges will be familiar to many digital leaders outside this space, from change management to data accessibility and quality...

AI and drug discovery: Parsing the hype

It takes between 9.5 and 15 years on average to develop one new medicine, from initial discovery through regulatory approval, and costs ~$2.6 billion, with a tiny number of drugs in development making it to market.

Hype around the ability of AI and ML to transform this process has been pronounced in recent years.

So why are even the freely available production-ready tools apparently generating little uptake? What are the biggest challenges to the use of AI and ML in drug discovery? Is high quality training data widely available? What kind of cultural and organisational challenges does wider use of AI in drug discovery raise for the sector?

The Stack set off to find out more – and where better to start than with REINVENT co-creator Dr Ola Engkvist, Head of Molecular AI at AstraZeneca and Adjunct Professor in AI and Machine Learning-Based Drug Design at Chalmers University of Technology.

He told us that although the repository may look quiet, the tool had indeed borne fruit. “REINVENT is now used in 2/3 of our internal small molecule projects and [has] contributed to several milestone transitions for projects where it has been applied.

“Our work with deep learning-based molecular de novo generation including REINVENT has led to several very interesting academic collaborations. An example is a collaboration with Aalto University in Helsinki about Human-In-The-Loop ML.”

REINVENT uses reinforcement learning to help identify novel active compounds and “facilitate the idea generation process by bringing to the researcher’s attention the most promising compounds” using a generative model capable of generating compounds in the SMILES (Simplified Molecular Input Line Entry System) format.
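For readers unfamiliar with SMILES, the format writes a molecule as a short line of text, which is what lets a generative model build compounds one character-like token at a time. The snippet below is a minimal sketch of that idea only: the `tokenize_smiles` helper and its regular expression are illustrative assumptions, not code taken from REINVENT, and a real pipeline would typically validate molecules with a cheminformatics toolkit.

```python
import re
from typing import List

# Hypothetical helper for illustration; not part of REINVENT's API.
# Bracketed atoms (e.g. [Na+]), two-letter halogens (Br, Cl) and two-digit
# ring closures (%12) are matched before falling back to single characters.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|.")


def tokenize_smiles(smiles: str) -> List[str]:
    """Split a SMILES string into the tokens a sequence model would emit."""
    return SMILES_TOKEN.findall(smiles)


# Well-known molecules written as SMILES strings.
examples = {
    "ethanol": "CCO",
    "benzene": "c1ccccc1",
    "aspirin": "CC(=O)Oc1ccccc1C(=O)O",
}

for name, smiles in examples.items():
    print(name, tokenize_smiles(smiles))
```

Joining the tokens back together reproduces the original string, so nothing is lost in the split; a generative model of the kind described here would learn which token is likely to come next.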

AI and drug discovery: data remains a challenge

Scientists say that such machine learning models have "emerged as a powerful method to generate chemical space and obtain optimized compounds", but that they can be time and computational resource-heavy.

The AstraZeneca team continues to refine its efforts in a bid to tackle the need for "multiple interactions between the agent and the environment" in reinforcement learning. Asked about the main challenges that AstraZeneca encounters, Dr Engkvist tells The Stack that “in drug discovery, I would say historically the bottleneck has been the cost of data generation” – adding that “this is currently changing rapidly.”

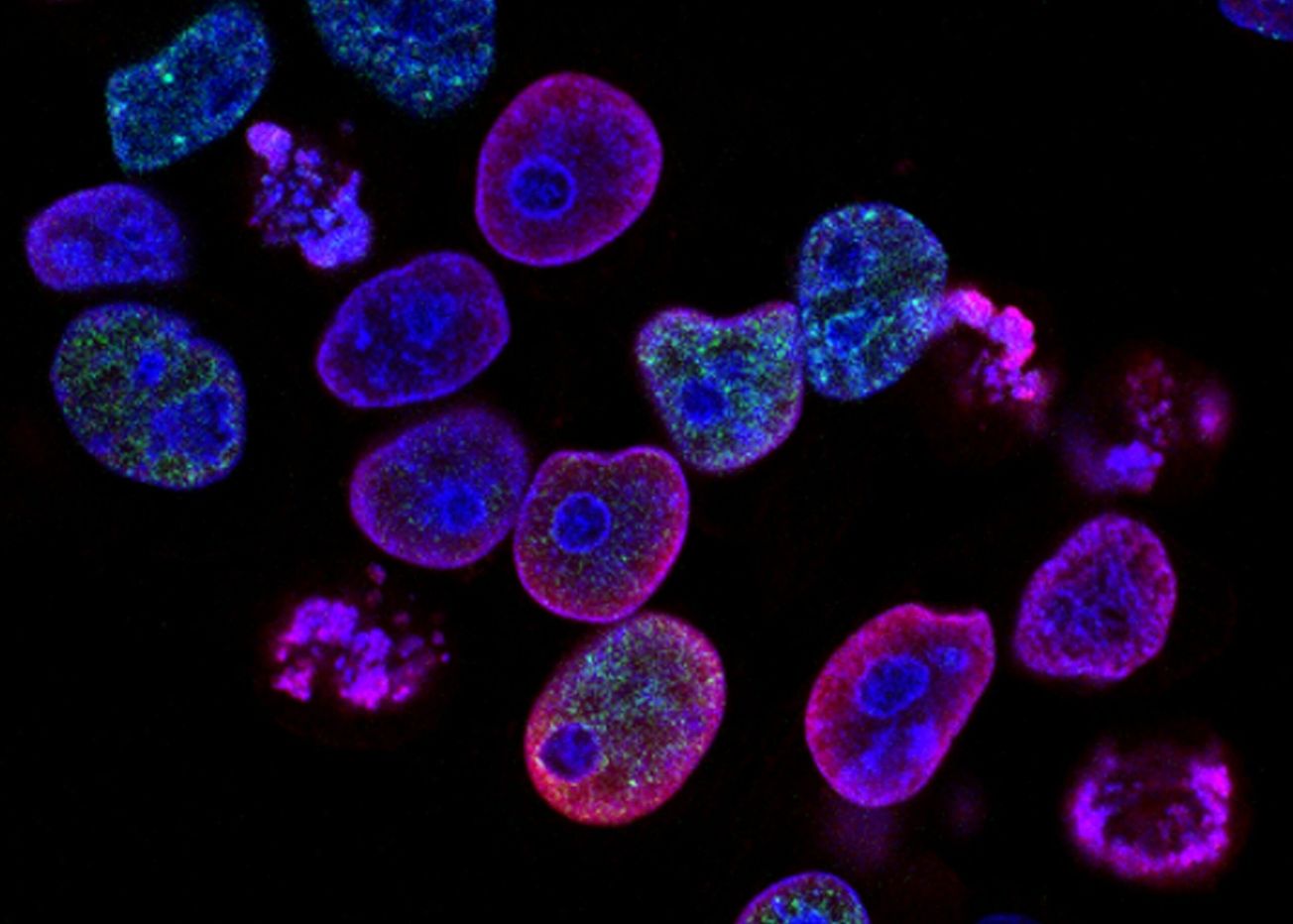

"Large data sets suitable for ML/AI can now be generated much more cost efficiently,” he suggests, with examples including “high-throughput experimentation technologies for chemical reaction data generation and cell-paint imaging technology for studying morphological changes of cells perturbed by a compound.”

Data generation

When it comes to AI and drug discovery (an expansive term) much ongoing work takes place using preclinical data – data from research that takes place before human clinical trials. This work is complicated both by the fact that complex biology is context-dependent and by the fact that data can be hard to obtain.

“The issue isn’t data shortage, it’s data accessibility. Most high-quality data relevant for drug discovery is siloed within pharmaceutical and CRO [clinical research organisation] companies, particularly those who have been able to generate proprietary data for decades,” says Dr Tudor Oprea, VP of Translational Informatics at Roivant Sciences, which owns a family of biopharmaceutical companies.

This issue often forces startups and smaller companies to use publicly available data, such as from peer-reviewed reports, which can often be of poor quality, as our other interviewees emphasise. (Oprea adds that the benefits of AI deployment go beyond improving the pace of drug discovery. AI can also "reduce the number of animal experiments [and] reduce usage of hazardous chemicals with focus on sustainability and ‘green chemistry’" he says.)

“The problem,” says Dr Vladimir Makarov, an AI Consultant at Pistoia Alliance, “is that such data are typically treated as high-value confidential assets that bring competitive advantage to the individual companies.”

Dr Makarov describes the amount of available high-quality data for specific health conditions as “dismal”.

"The importance of high-quality data cannot be overemphasised" he tells us: "At the same time, the industry must admit that the typical peer-review process of today does not result in publication of high-quality data. As a result, both reproducibility of research, and progress in AI and ML, which are particularly sensitive to data deficits and biases, suffer. Moreover, the discovery of life-saving treatments is delayed."

The Pistoia Alliance is a non-profit members’ organization formed in 2009 by representatives of AstraZeneca, GSK, Novartis and Pfizer. The Alliance is dedicated to broadening collaboration in the life sciences and now includes 17 of the top 20 global pharmaceutical companies by revenue as members.

Responding to a series of questions emailed through by The Stack, Dr Makarov cites two cases of recent research where relying on publicly available data for meta-analysis yielded unsatisfying results.

“In the Oral Squamous Cell Carcinoma case, out of over 1200 samples referenced in historic publications only about 300 were found to be suitable for training of a machine learning model. In the Clostridium difficile infection case, upon review of 27 published data sets only four were found to be of sufficient quality for further research, and the count of subjects shrunk from thousands to low hundreds” he says.

Makarov says that the low-quality output of data derived from the peer-review process is something the industry “must admit.”

Data availability is "dismal"

The frustrating reality is, when companies are prepared to share their data sets, the potential for rapid discovery rates is massive. A prime example was the Pistoia Alliance’s data-thon event held in 2019.

A group of volunteer scientists were permitted to use data and software provided by Elsevier, a member company of the alliance, to find a way to repurpose a drug for chronic pancreatitis.

In under 60 days, the participating researchers “quickly identified four lead compounds possibly suitable for repurposing for chronic pancreatitis treatment – one of which is now in clinical trials,” Makarov explains.

This timescale would have been even shorter had the volunteers not been working reduced hours around their day jobs and other commitments. Makarov calls the results of the project “unprecedented” and asserts that without Elsevier having been willing to share data for the hackathon, it would have been impossible.

"You need to understand the depth of the data"

Novartis is among the large pharmaceutical firms that have been working to improve data availability, including simply for their own research scientists. (Its data has been highly fragmented and siloed.)

Since 2018, the firm has been collating and digitising two million patient-years of anonymised data as part of a programme called data42. The project allows AI and natural language processing (NLP) technology “to sift through these mountains of data to surface previously unknown correlations between drugs and diseases,” writes Jane Reed, director of life sciences at Linguamatics. According to Dr Gerrit Zijlstra, Chief Medical Officer at Novartis UK, “data42 is now available to all our researchers and is a real opportunity for drug discovery.”

However, data42, like many other projects combining AI and drug discovery, is still in its early stages and not without a variety of complexities (despite comprising over 20 petabytes of data, or the equivalent of around 40,000 years' worth of music on an MP3 player, as Novartis’ Director of Campaigns Goran Mujik puts it).

“In fields like finance, this type of exercise has already been done. With clinical trial data, it is very different and much more difficult,” notes Dr Doerte Seeger, Business Excellence Head at Novartis UK, responding alongside the Novartis CMO in emailed comment to The Stack. Data cleaning, curating, and quality control remain major obstacles in the way of actual progress, she says.

As Novartis CMO Dr Zijlstra tells us: “The challenge is that we need data scientists in the healthcare system to help make sense of the data and ensure its quality for the best use. In oncology, for example, many medicines require testing for certain mutations. This testing needs to be identified through large data sets.”

In 2019, Novartis announced a five-year collaboration with the University of Oxford’s multidisciplinary Big Data Institute to improve the efficiency of drug development. As the timescale suggests, this transformation will not happen overnight. “You need to really understand the breadth and depth of the data, including where and how it sits, and how to access it with the right governance, intent, and purpose,” Dr Zijlstra confirms.

Whilst data quality remains a challenge, advanced computational firepower is helping a lot when it comes to AI and drug discovery – and hardware and compute providers are keen to contribute to the cause.

NVIDIA, for example, has contributed its own supercomputers to UK healthcare researchers. On top of this, the cost of cloud computing is falling and the quality of cloud providers' instances is improving, according to Dr Niloufar Zarin, Associate Director of AI at drug discovery startup Healx. “Cloud providers are actually spending time researching how they can enhance the capabilities to help us do our research,” she says. However, training AI algorithms to work leads to an inevitable human bottleneck because AI-generated results need verification.

“We ask our pharmacologist to review them to see that they make sense,” Zarin notes. By comparing disease profiles with its drug databases, Healx found a nonsteroidal anti-inflammatory drug that could be used to treat the rare disease fragile X syndrome, and the candidate has proceeded to clinical trials. Healx’s successful use of NLP in the rare disease domain shows that a start-up, as much as the bigger firms, can successfully harness AI for drug discovery.

As Dr Gurpreet Singh, Head of Applied Machine Learning at Bayer, emphasises to The Stack, when it comes to AI and drug discovery, it’s not just data or complex compute issues that matter, but experienced people.

He says: "Is [AI] going to revolutionise [drug discovery]? Yes. Is the hype justified? No... [The] drug discovery process is a very complex process. It's not just one umbrella term. [You can't just say] 'here's the data; we'll come click the button'. You have to understand your input domain... on the early discovery side, I will say, we are still trying to understand human biology. If you talk to any pharmaceutical company they will all say, 'I don't think we understand human biology completely even yet'. So that speaks to the complexity of environment that we are dealing with. So some of the things are well understood, but others will need data-in-motion as we go on."

He adds: "Things are definitely going to change and I'm a big believer. [With] AlphaFold [an AI system developed by DeepMind that predicts a protein’s 3D structure from its amino acid sequence] people never believed that the field could be modelled in some sense. I think this [field and set of tools will] greatly improve. And so we'll have separate AlphaFold 2-based models for everything that we are working on in the discovery space. But this is a start of things. Is the change gonna come? Certainly, very certainly, I think in the next couple of years, we will see more efficient timelines in terms of drugs coming to the market. People will feel happier, because nobody is comfortable waiting 13 years for a drug... So I think what we need to really understand is, how do we apply this? This is a tool: How do I apply this to my problem, and make the things more efficient? [When] we have applied tools to every part of our process and then they all work in synchrony, that is when we will bring the revolution. One tool in a process of drug discovery is not going to change the entire drug discovery process."

Industry hopes remain high that the pace of change is mounting and that more breakthroughs will emerge in the near future. As analysts at investment bank Morgan Stanley put it in July this year: "We anticipate an inflection point for the sector, driven by data readouts from drug trials over the next two years."